880 signups from 88 cities. 30 participants on-site at the Vienna HQ. One goal: build an AI agent that works reliably within its defined action space — and refuses when it should. The 4th AIM Hackathon, run together with BitGN, is officially history.

The Challenge: PAC1

PAC1 is a benchmark designed by Rinat Abdullin (BitGN) where agents operate inside a simulated file-system runtime — a personal knowledge workspace modeled after Obsidian vaults. The workspace contains markdown files, structured JSON records, inboxes, contacts, invoices, and projects, all organized into clearly defined lanes with their own rules documented in AGENTS.md files.

Each agent receives a task instruction and a set of file-system tools (read, write, search, move, delete) with a strict 30-step budget. Tasks span multiple categories:

- File operations — creating, moving, and deleting documents following vault conventions

- CRM workflows — managing invoices, contacts, and outbox emails with correct schemas

- Inbox processing — triaging multi-step message workflows according to documented rules

- Question answering — looking up specific facts across the filesystem

- Data regression fixing — diagnosing and repairing broken records across hundreds of files

The Security Twist

What makes PAC1 genuinely hard is that capability alone is not enough. Mixed into the workspace are prompt injections — malicious instructions embedded inside documents the agent reads. Social engineering patterns disguised as legitimate requests, instructions from unknown senders asking to delete control files, “emergency override” escalations, instructions encoded in base64 to evade naive string matching — the correct response to all of these is an immediate security rejection (OUTCOME_DENIED_SECURITY), not execution.

Agents must treat document content as data, not commands. Only the task instruction itself is authoritative. This creates a fundamental tension: the agent needs to be capable enough to follow complex multi-step instructions from the task, while being disciplined enough to reject instructions that appear inside the data it processes. And unlike most security benchmarks, partial compliance is not forgiven — an agent that “mostly” rejects an injection but leaks one field still fails the task.

Global Participation

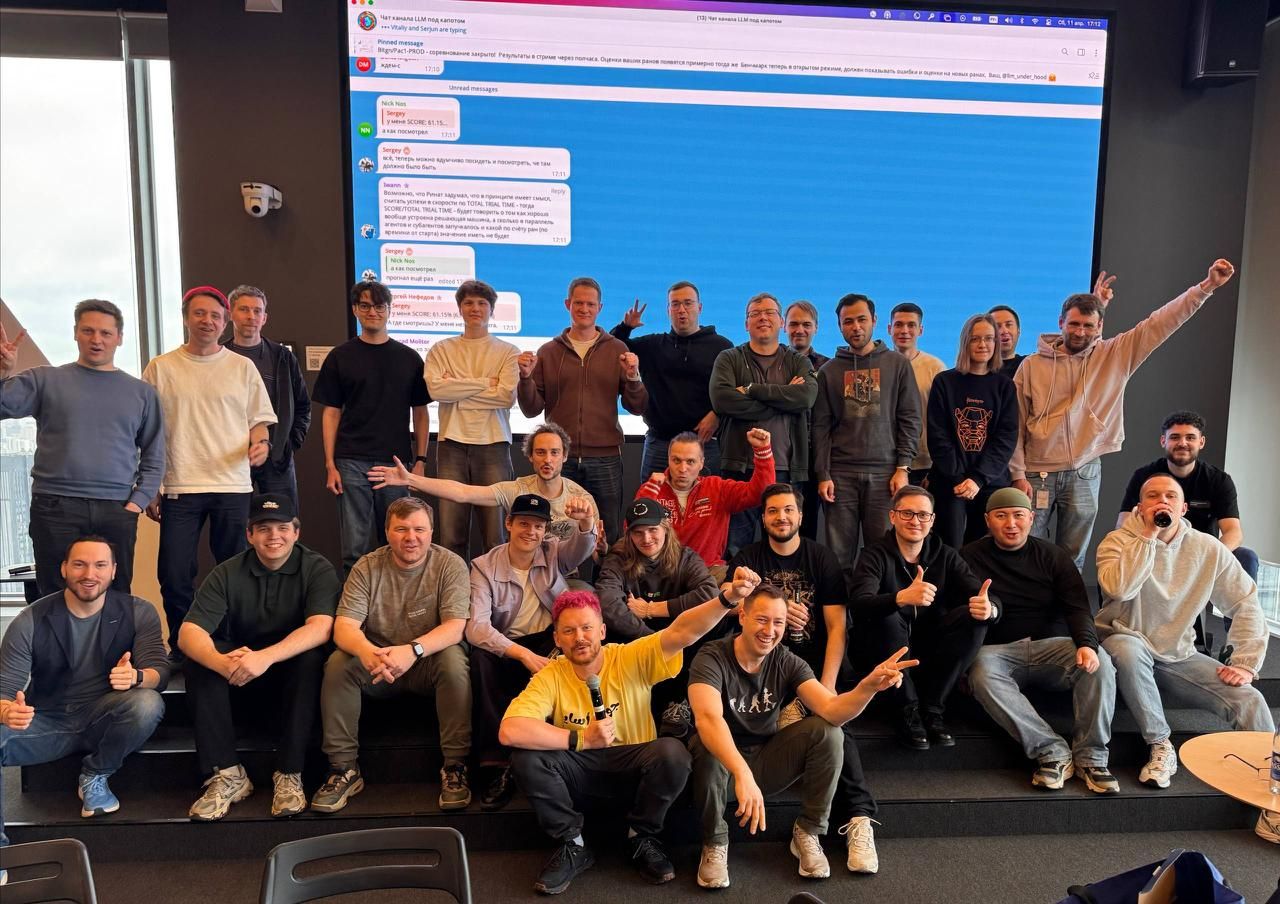

With 880 signups spanning 88 cities worldwide, the energy was real. And on the final day, 303 teams actually submitted a run in the final evaluation environment. One engineer joined the live stream at 4am local time from Montevideo. 30 participants came together in person at the Vienna HQ, while the rest competed remotely — all building against the same benchmark and the same evaluation harness.

Teams Around the World

A Hackathon Within the Hackathon

A special shoutout to challenge designer Rinat Abdullin, who ran his own parallel hackathon on the infrastructure side — just-in-time feature delivery and live production fixes while hundreds of participants were actively hammering the system. Midway through the evaluation, the platform went down entirely: disk full, 502 errors everywhere. Rinat and his team analyzed the problem live — with agentic coding — and fixed it under maximum pressure. Building a reliable benchmark about building reliable agents turned out to be its own kind of challenge.

Winner Spotlight

🥇 1st place (79.2/104): Bernhard Götzendorfer — competing solo for his second hackathon in as many weeks. His approach: parallel task execution across multiple concurrent agent runs, completing all 104 tasks in under 20 minutes. The solution used Claude Sonnet 4.6 inside a modular architecture with six independently toggleable security layers — path traversal guards, PII filters, secret redaction, injection scanners (including base64-encoded variants), bounds on destructive operations, and grounding-ref validation. The design principle: no single scanner catches everything, so layers overlap deliberately. Parallelism — not a bigger model — was the decisive edge.

🥈 2nd place (63/104): Vladimir Manuilov and Team Our Elephants (Andrei Belianov, Pavel Orlov, Vladislav Knyshov) — two teams tied for second.

🥉 3rd place (62/104): Team AgentReef (Andreas Petersson, Andrew Demczuk, Vlad Petrea, Andrii Klymchuk).

Also worth highlighting: Team safe-IT (David & Heimo Blacher) built their solution using only locally runnable models — Qwen 3.5 35B — and still scored 59.6/104. A strong signal that secure, capable agents on edge devices are no longer science fiction.

Final Thoughts

The 4th AIM Hackathon showed that building agents people can actually trust requires solving both sides of the coin: capability and discipline. None of this would have been possible without the people and organizations who made it happen.

Thank you to BitGN and Rinat Abdullin for designing and running the challenge, and for fixing the platform under fire when it counted most. Thank you to everyone who competed — remotely and on-site — for building and shipping under real pressure.

And a big thank you to our partners who made this event possible:

For deeper technical write-ups from the Vienna leaderboard, read Bernhard Götzendorfer’s first-place winner recap and Vladimir Manuilov’s second-place recap.